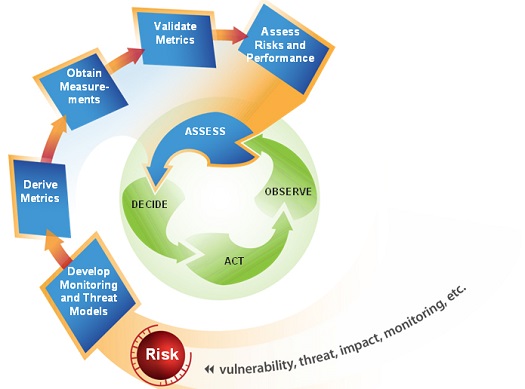

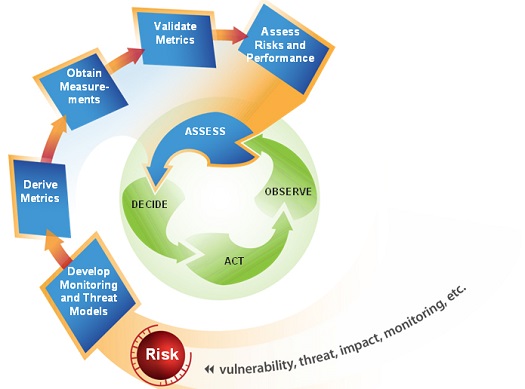

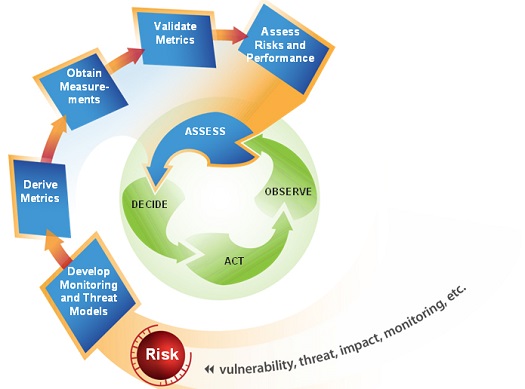

Army Wants Computer That Defends Against Human-Exploit Attacks

Army researchers seek ways to teach computers to identify and fix attacks on their own

The U.S. Army Research Laboratory has awarded as much as $48 million to researchers trying to build computer-security systems that can identify even the most subtle human-exploiting attacks and respond without human intervention.

The project will focus on detecting specific opponents and types of attack online, measuring the risk of specific activities, and changing the security environment to block or minimize those threats with the least cost and trouble to the victim. The more difficult part of the research will be to develop models of human behavior that allow security systems to decide, accurately and on their own, whether actions by humans are part of an attack (whether the humans involved realize it or not).

The Army Research Lab (ARL) announced Oct. 8 a grant of $23.2 million to fund a five-year cooperative effort among a team of researchers at Penn State University; the University of California, Davis; the University of California, Riverside; and Indiana University. The five-year program comes with the option to extend it to 10 years with the addition of another $25 million in funding.

The goal is to develop an evidence-based set of guidelines and analyses that would allow ARL security specialists to understand the security stance and vulnerability of Department of Defense computers, and to build systems that would help mitigate those vulnerabilities.

"We're going to develop a new science of understanding how to make security-relevant decisions in cyberspace," according to Patrick D. McDaniel, professor of computer science and engineering at Penn State. "Essentially, we're looking to create predictive models that allow us to make real-time decisions that will lead to mission success."

That means looking at how phone and online networks behave. As part of that, researchers will need to systematize the criteria and tools used for security analysis, making sure the code detects malicious intrusions rather than legitimate access, all while preserving enough data about any breach for later forensic analysis, according to Alexander Kott, associate director for science and technology at the U.S. Army Research Laboratory.

Identifying whether the behavior of humans is malicious or not is difficult even for other humans, especially when it's not clear whether users who open a door to attackers knew what they were doing or, conversely, whether the "attackers" are perfectly legitimate and it's the security monitoring staff who are overreacting.

Twenty-nine percent of attacks tracked in the April 23, 2013, Verizon Data Breach Investigations Report could be traced to social-engineering or phishing tactics whose goal is to manipulate humans into giving attackers access to secured systems. Two-thirds of companies train users about phishing and social engineering, according to a July study from security company Rapid7, but half of the companies conducting the training admitted it wasn't particularly effective. Seventy-six percent of network intrusions during 2012 used weak or stolen credentials, and two-thirds of all breaches remained undetected for months after the initial penetration, according to the Verizon report.

It's not possible to plug all the holes a human can create, but it should be possible to identify many of them, as well as the situations in which it is likely a human's judgment was compromised, possibly before any real damage is done, according to Lorrie Cranor, associate professor of computer science, engineering and public policy at Carnegie Mellon University.

"One of the salient aspects of our proposed research is in the realization that humans are integral to maintaining cybersecurity and to breaches of security," she said. "Their behavior and cognitive and psychological biases have to be integrated as much as any other component of the system that one is trying to secure."

Direct activity by humans can be difficult enough to control; mixing it with the media through which their communications travel makes it even more difficult to identify the path of an exploit or attack. That is another of the areas on which the team, consisting of 17 researchers and 30 graduate students in all, will work.

"We will focus on unique ways in which communication networks interact with social networks and information networks," according to Thomas LaPorta, a professor of computer science and engineering at Penn State. "For example, we can all see how new ways of building social networks through services such as Facebook impact how communication networks like the Internet and cellular phone networks are used. Ultimately we will be able to control the behavior of communication networks in a way that allows people to exchange the most important information."

Image: U.S. Army Research Laboratory
