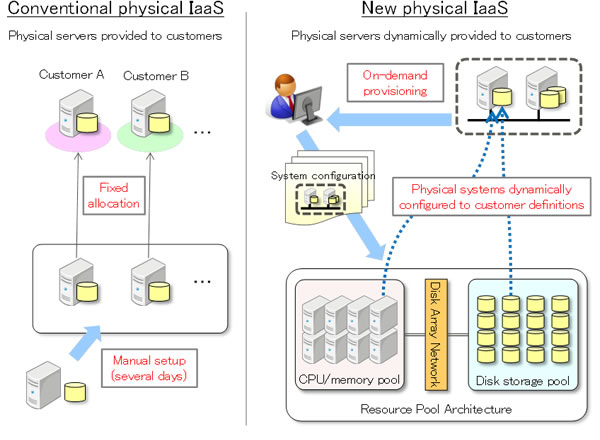

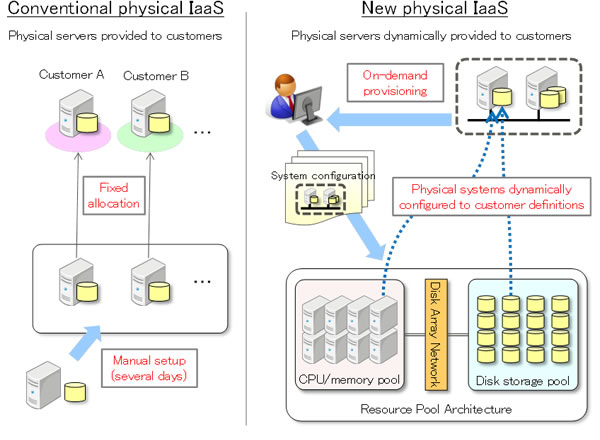

Fujitsu Intros Configurable Physical-Virtual Server

Renting a chunk of public cloud for sensitive corporate data or applications isn't an option for security-conscious or heavily regulated companies. Private clouds made up of company-purchased servers maintained at a colo facility by a hosting provider avoid the need to share hardware, but lose the dynamic capacity that's one of the cloud's best selling points.

Researchers at Fujitsu Labs have split the difference by building a big server that can serve many customers, configured so that each customer can call dibs on the specific CPUs, disk-storage systems and other resources necessary to run a server. A layer of middleware and the configuration interface are responsible for keeping the hardware modules separated while also pooling them so they can share the same power supplies and server rack.

One major advantage, according to Fujitsu, is that the half-private/half-public server allows customers hosting private clouds in a colo facility to get the same kind of on-demand capacity increases available on multi-tenant systems. Customers who would normally have to wait days for the colo provider to acquire, configure and install a new physical server can get one in about 10 minutes by having the provider configure physical computing resources into a virtual-physical server that can be inserted easily into that customer's server farm.

Fujitsu calls the base technology Resource Pool Architecture (RPA), and describes it in ways that make it sound like any other cloud platform. But rather than relying on encryption and virtual servers to separate one customer's workload from another, Fujitsu's platform is built on a rack-sized system crammed with dozens of CPUs, disk storage and other resources, connected via a high-speed interconnect. Calling it "physical IaaS" may be confusing, and even a little inaccurate, but calling it a shared server wouldn't be accurate, either.
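The pooling idea can be made concrete with a toy model. The sketch below is purely illustrative and is not Fujitsu's middleware or API: the `ResourcePool` class, its `compose` and `release` methods, and the module counts are all hypothetical names and numbers chosen for the example. It shows only the bookkeeping the article implies, in which each CPU or disk module belongs to at most one composed server at a time.

```python
from dataclasses import dataclass, field


@dataclass
class ResourcePool:
    """Toy model of a rack whose CPU and disk modules are handed out exclusively.

    Hypothetical illustration only; not based on any published Fujitsu interface.
    """
    free_cpus: set[int]
    free_disks: set[int]
    # server name -> (owner, CPU module ids, disk module ids)
    servers: dict[str, tuple[str, frozenset[int], frozenset[int]]] = field(
        default_factory=dict
    )

    def compose(self, name: str, owner: str, n_cpus: int, n_disks: int):
        """Dedicate free modules to a new server and remove them from the pool."""
        if name in self.servers:
            raise ValueError(f"server {name!r} already exists")
        if n_cpus > len(self.free_cpus) or n_disks > len(self.free_disks):
            raise RuntimeError("not enough free modules in the pool")
        cpus = frozenset(sorted(self.free_cpus)[:n_cpus])
        disks = frozenset(sorted(self.free_disks)[:n_disks])
        self.free_cpus -= cpus      # these modules are no longer available to anyone else
        self.free_disks -= disks
        self.servers[name] = (owner, cpus, disks)
        return cpus, disks

    def release(self, name: str) -> None:
        """Tear down a server and return its modules to the shared pool."""
        _owner, cpus, disks = self.servers.pop(name)
        self.free_cpus |= cpus
        self.free_disks |= disks


# Two customers compose servers from the same rack; their modules never overlap.
pool = ResourcePool(free_cpus=set(range(8)), free_disks=set(range(16)))
a_cpus, a_disks = pool.compose("web-1", owner="customer-a", n_cpus=2, n_disks=4)
b_cpus, b_disks = pool.compose("db-1", owner="customer-b", n_cpus=2, n_disks=4)
print(a_cpus.isdisjoint(b_cpus), a_disks.isdisjoint(b_disks))
```

In this model, adding capacity is a change to a table rather than a hardware installation, which is the intuition behind the short provisioning time Fujitsu claims. The shared interconnect, power supplies and rack are deliberately left out of the sketch.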
The RPA server isn't as dedicated to a single customer as a standalone physical server that never touches the data, backplane or power supply of a stranger's hardware. The ability to dedicate a CPU, disk storage and memory to a single customer isolates that workload to a certain extent, even though the whole system has to share the same high-speed interconnect, which might allow for some bumping of elbows among data belonging to different customers. The modules dedicated to one customer won't run more slowly when all the other modules are busy with other customers' workloads, as would be the case if workloads were separated only by the virtual machines within which they run.

A prototype system was able to run 48 servers and 512 hard-disk and solid-state drives on a single system and allow new servers to be configured in as little as 10 minutes, according to Fujitsu. The system also gives customers control over the physical modules within it and lets them configure those modules at will to match the needs of particular workloads.

The systems are not yet publicly available. Fujitsu is scheduled to begin testing them internally this month. It did not announce when the systems might be publicly available or how much they would cost.

Image: Fujitsu
