Last week, Facebook announced Graph Search, a system for searching the social network’s vast collection of users, photos, and “Liked” interests. But how will Facebook power it? The Disaggregated Rack, according to a presentation a Facebook executive made last week at the Open Compute Summit in Santa Clara, Calif.

As a global network, Facebook’s servers are constantly in use: more than a billion users add over 320 million photos per day (on top of about 220 billion already stored), “Like” and comment 4.2 billion times daily, and establish 140 billion friend connections. According to Jason Taylor, director of capacity engineering and analysis at the company, 82 percent of those users come from outside the United States.

“One consequence of this global traffic, from users all over the globe, is that our servers are hot for about probably 14 to 16 hours in the day,” Taylor said. Europeans start accessing Facebook at around 8 PM PT; that traffic reaches a peak at around 9 PM and stays high until about 4 AM, Taylor said. East Coast traffic peaks between 10 AM and 4 PM PT, dipping down just as the Midwest comes online, before hitting another evening peak at about 7 PM. Traffic then dips quite a bit for Asia, where the company’s user base is much smaller.

Facebook’s Current Infrastructure

Almost all of Facebook’s traffic comes through front-end Web clusters, each containing about 12,000 servers. Each rack contains between about 20 and 40 servers. For serving its Web pages (the company’s most important function), Facebook devotes around 250 racks, plus 30 racks of cache and 30 racks of ads, as well as some other, smaller functions. The cluster is designed so that there are no bottlenecks before the Web server, although it may call a back-end database or message server. “Everything in these clusters is designed to maximize the utilization of the Web CPU,” Taylor said.

Racks of multi-feed servers power the middle column on Facebook’s page, or the Wall. Each multi-feed rack contains a complete copy of user activity for the last two days. All 40 servers in a rack work together, running two services: a leaf, which contains all the data and consumes virtually all of the RAM, and an aggregator, which consumes most of the CPU cycles. When a Web server needs to generate a user’s Wall, it picks a random aggregator, which generates the list of the user’s friends and then queries all of the leaves in parallel. Each leaf responds with the friends’ most recent activity, and the aggregator ranks that data based on interests and the relationship with each friend, then provides the top 40 “stories” or posts to the Web tier. The cache is used for authentication and for providing the text of the post, comments, and likes; memcache hit rates are on the order of 96 percent.

Facebook has five standard types of servers: Web, database, Hadoop, the Haystack photo infrastructure, and the feed servers, each with a different hardware configuration. The majority of the servers Facebook designs and purchases are built for Web serving. Limiting the types of servers maximizes pricing competition among Facebook’s suppliers, allows the company to easily re-purpose servers, and makes them easy to service.

The problem is lack of flexibility, especially as service needs change over time. Designers need to account for the changing relationship between CPU and RAM needs, but also for disk space, flash capacity, and IOPS. Some services are bound by just one constraint: at Facebook, Web servers are typically bound only by CPU, so the solution is to buy more servers. But for problems with more than one variable, another answer was needed.
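The multi-feed request path described above (pick a random aggregator, fan out to every leaf in parallel, rank, return the top 40) can be sketched roughly as follows. This is an illustrative sketch only, not Facebook's implementation: the `Story`, `Leaf`, and `Aggregator` classes, the idea that each leaf holds a slice of the activity, and the affinity-then-recency ranking are all assumptions standing in for details the talk did not give.

```python
import random
from concurrent.futures import ThreadPoolExecutor
from dataclasses import dataclass


@dataclass(frozen=True)
class Story:
    author: str
    text: str
    timestamp: int


class Leaf:
    """RAM-heavy service: keeps recent activity in memory (hypothetical layout)."""

    def __init__(self, activity):
        self.activity = activity  # maps author -> list of Story

    def recent(self, friends):
        # Return whatever this leaf holds for the requested friends.
        return [s for f in friends for s in self.activity.get(f, [])]


class Aggregator:
    """CPU-heavy service: fans out to all leaves, then ranks the results."""

    def __init__(self, leaves, friend_graph, affinity):
        self.leaves = leaves
        self.friend_graph = friend_graph  # maps user -> list of friends
        self.affinity = affinity          # maps (user, friend) -> score (assumed)

    def build_feed(self, user, top_n=40):
        friends = self.friend_graph.get(user, [])
        # Query every leaf in parallel, as the article describes.
        with ThreadPoolExecutor(max_workers=len(self.leaves)) as pool:
            batches = list(pool.map(lambda leaf: leaf.recent(friends), self.leaves))
        stories = [s for batch in batches for s in batch]
        # Placeholder ranking: relationship strength first, then recency.
        stories.sort(
            key=lambda s: (self.affinity.get((user, s.author), 0.0), s.timestamp),
            reverse=True,
        )
        return stories[:top_n]


def pick_aggregator(aggregators, rng=random):
    """The Web tier picks any aggregator at random."""
    return rng.choice(aggregators)
```

The split mirrors the resource profile in the article: the leaf does almost no computation but holds the data, while the aggregator holds little state but does the ranking work, which is what makes the two candidates for separate hardware.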

The Solution? Disaggregated Rack.

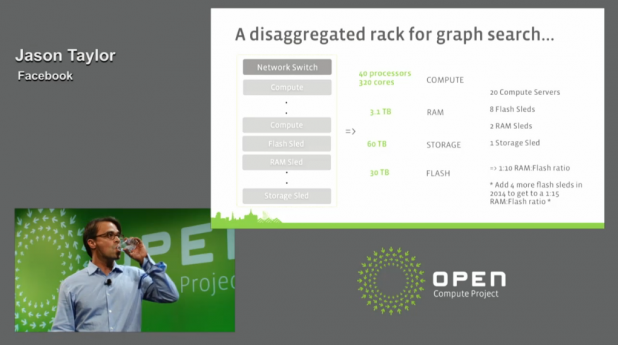

“We wanted to know, can we build more hardware in terms of services, and still do more in terms of serviceability and cost,” Taylor said. A multi-feed rack requires about 80 processors, 5.8 TB of RAM, 80 TB of storage, and 30 TB of flash. “We think what we can do is break up each of these resources and scale them completely independently of one another,” he added. “This is what we think we can do with Disaggregated Rack.” Facebook broke down the problem into several pieces.

- Compute: A server with 2 processors, 8 or 16 DIMM slots, no hard drive, a small flash boot partition, and a “big NIC” with plentiful throughput to enable network booting.

- RAM Sled: Facebook wants to replace the leaves with a service running on a RAM sled that holds between 128 GB and 512 GB of memory and costs $500 to $700 per sled. Only a basic CPU would be needed. Total throughput would be 450,000 to 1 million key queries per second.

- Storage: Facebook’s solution here is based on its Knox storage design. The I/O demands are low: 3,000 IOPS or so, Taylor said. But Facebook only wants to spend $500 to $700 apiece, excluding the cost of the drives.

- Flash Sled: Facebook would like between 500 GB and 8 TB of flash, with 600,000 IOPS. Excluding flash costs, Facebook would like the solution to cost around $500 to $700 apiece.

To power Graph Search, Taylor said, Facebook anticipates using 20 compute servers, 8 flash sleds, 2 RAM sleds, and a storage sled, for a total of 320 CPU cores, 3 TB of RAM, and 30 TB of flash. The critical ratio, Taylor added, is RAM to flash: in this case, about 1:10. A year from now, the ratio will climb to 1:15 to meet the efficiency gains that the Search team hopes to deliver, he added.

“The problem is if we don’t have all that flexibility, we have to crack all the cases, open up the servers, pop in more PCI-E cards, pop in more SSDs, whatever,” he suggested. “The point is, this is a lot easier to do upgrades, and scale.”

This is pretty much the opposite of virtualization, which aims to eliminate idle workloads and maximize efficiency. With Disaggregated Rack, Facebook is trying to push servers as hard as they can possibly go, while trimming the bottlenecks so that the hardware matches the application. Taylor didn’t provide many details on the next steps, dubbing it a “project” in the same vein as the Open Vault storage spec the company talked about last year.
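The Graph Search figures Taylor quoted can be checked with a little arithmetic. The sketch below uses only the numbers from the talk; the `RackPlan` class is a hypothetical helper, and the projected flash figure assumes that RAM stays at 3 TB while the ratio moves to 1:15, which the talk did not state.

```python
from dataclasses import dataclass
from fractions import Fraction


@dataclass(frozen=True)
class RackPlan:
    """Resource totals for one disaggregated rack (figures from the talk)."""
    compute_servers: int
    processors_per_server: int
    total_cores: int
    flash_sleds: int
    ram_tb: int
    flash_tb: int

    def cores_per_processor(self):
        return self.total_cores / (self.compute_servers * self.processors_per_server)

    def flash_per_sled_tb(self):
        return self.flash_tb / self.flash_sleds

    def ram_to_flash(self):
        # Exact ratio, reduced to lowest terms.
        return Fraction(self.ram_tb, self.flash_tb)

    def flash_for_ratio(self, flash_per_ram):
        # Flash needed if RAM is held constant (an assumption, see lead-in).
        return self.ram_tb * flash_per_ram


graph_search = RackPlan(
    compute_servers=20,
    processors_per_server=2,  # from the Compute bullet
    total_cores=320,
    flash_sleds=8,
    ram_tb=3,
    flash_tb=30,
)

print(graph_search.cores_per_processor())  # cores implied per processor
print(graph_search.flash_per_sled_tb())    # TB of flash per sled
print(graph_search.ram_to_flash())         # RAM:flash as a fraction
print(graph_search.flash_for_ratio(15))    # TB of flash at 1:15, RAM unchanged
```

Under that assumption, moving from 1:10 to 1:15 means adding flash sleds rather than opening servers, which is the upgrade path Taylor describes.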

Image: Open Compute Webcast