Released just two years ago, Docker is an open platform for developers and sysadmins to build, deploy, and run distributed applications. For developers, it is a particularly handy way to create applications and services and launch them in seconds. (It also comes with a cute whale logo, above.)

For now, Docker is primarily for Linux and Mac, although you can use it on Windows with VirtualBox. (There’s a project called Kitematic that will install and set up VirtualBox for you.)

Docker uses containers, which manage computing resources such as CPU shares, network traffic and memory at the kernel level, so that the processes running inside them can use those resources without interfering with the rest of the system. (These processes could be lightweight Linux hosts, multiple Web servers and applications, or a database backend.) A container is smaller and lighter than a virtual machine and does not require the hypervisor needed to manage multiple virtual machines.

Here are five reasons to consider using Docker for your next distributed-application project.

Blinding Speed

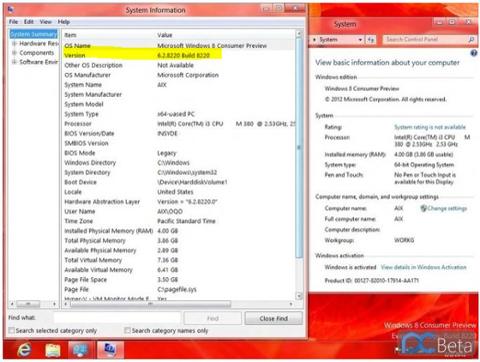

Perhaps you want to set up NGINX on an Ubuntu server, with some PostgreSQL thrown into the mix, and then use the result to run a website. On a normal server, it would probably take at least two hours to set everything up. Docker would realistically shave the time down to a couple of minutes. If you have several websites and each requires a different PHP/MySQL version, it's easy to put each one in a container with the specific versions of PHP and MySQL it needs.

Installing Ubuntu 12.04 and echoing "Hello World" is as simple as this one-line command:

[shell] sudo docker run ubuntu:12.04 /bin/echo 'Hello World' [/shell]

The first time you go through this process, as things set up, you get the following output (on subsequent runs, you’d just get the "Hello World"):

[sudo] password for david:

Unable to find image 'ubuntu:12.04' locally

12.04: Pulling from ubuntu

c50f7e0846e6: Pull complete

fd248b999044: Pull complete

9f91f850df24: Pull complete

ac6b0eaa3203: Already Exists

ubuntu:12.04: The image you are pulling has been verified. Important: image verification is a tech preview feature and should not be relied on to provide security.

Digest: sha256:f616fbee0f43099a493a04540a915c26796d8d5a262a3e5f62752827d8eb970e4

Status: Downloaded newer image for ubuntu:12.04

Hello World

In a more typical scenario, you'd create a Dockerfile with instructions on how to set up the container with the application you need, fetch the latest version of your application from version control, build it and run it.
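As a rough illustration of that workflow, the shell sketch below writes a minimal Dockerfile for the NGINX-on-Ubuntu scenario mentioned earlier. The base image tag matches the Hello World example; the package name, the exposed port and the `hello-nginx` image name used afterwards are assumptions made for illustration. A real Dockerfile would typically add steps that fetch and build your own application.

```shell
#!/bin/sh
# Write a minimal Dockerfile into the current directory.
# Assumptions: the ubuntu:12.04 base image from the example above and the
# distribution's stock nginx package; adjust both for a real project.
cat > Dockerfile <<'EOF'
# Start from the same base image used in the Hello World example
FROM ubuntu:12.04

# Install NGINX from the distribution's package repository
RUN apt-get update && apt-get install -y nginx

# Document the port the web server listens on
EXPOSE 80

# Keep NGINX in the foreground so the container stays running
CMD ["nginx", "-g", "daemon off;"]
EOF

echo "Wrote Dockerfile"
```

With that file in place, `sudo docker build -t hello-nginx .` would build the image, and `sudo docker run -d -p 8080:80 hello-nginx` would start a container from it with the web server published on port 8080 of the host.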

Big Companies Use It

For a project that's only a couple of years old, Docker's adoption by companies has been nothing short of remarkable. Not only do open-source firms have official Docker repositories, but tech giants such as Google and Microsoft have incorporated Docker into their cloud products. (Many of these companies are contributing code back to Docker.)

Isolation and Security

The big advantage of using containers over virtual machines is that only the binaries for the applications and support files need to be copied. (In a virtual machine, there also needs to be a copy of the guest operating system, which can prove substantial.) Containers on a server all share the same operating system, which lets a server host many more containers than virtual machines. Don't underestimate the advantage: A typical server might run 10 to 100 virtual machines or 100 to 1,000 containers.

Each container has its own network stack, and applications in one container are isolated from those in other containers, meaning they can't affect each other unless configured to communicate over specified network interfaces. The Docker daemon runs as root, so access to it should be restricted, but containers are quite secure by default. Running an application in a container sandboxes it, so if it's compromised, the attacker's access is largely confined to that container.
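The resource controls and network isolation described above can be requested when a container starts. The sketch below wraps `docker run` in a small shell function; the function name `run_limited` and the example limits are hypothetical, and it's worth confirming the flags (`-m`, `--cpu-shares`, `--net`) against `docker run --help` for your Docker version.

```shell
#!/bin/sh
# run_limited MEMORY CPU_SHARES
# Hypothetical helper: runs the Hello World example with a memory cap,
# a relative CPU weight, and no network access.
run_limited() {
    memory_limit="$1"   # e.g. 256m
    cpu_shares="$2"     # relative weight; Docker's default is 1024
    docker run --rm \
        -m "$memory_limit" \
        --cpu-shares "$cpu_shares" \
        --net=none \
        ubuntu:12.04 /bin/echo 'Hello World'
}
```

Calling `run_limited 256m 512` as a user allowed to talk to the Docker daemon would run the container with a 256 MB memory cap and half the default CPU weight, then remove it once the command exits.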

Packaging Applications Eases Deployment

Taking applications from development to production can be quite troublesome, and a great deal of time and resources has been spent trying to streamline the process. Plenty of points along the way can go wrong, particularly configuration and dependencies on libraries, the operating system and resources. Docker simplifies the whole procedure: applications, together with all their dependencies, can be bundled into a single container. In turn, that container can be placed on another machine running Docker, and run there without any compatibility issues.
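One way to move a packaged application between machines, sketched below, is to export the image to a tar archive with `docker save` and import it elsewhere with `docker load`. The function names and the `myapp` image name in the usage note are hypothetical; pushing the image to a registry is another common route.

```shell
#!/bin/sh
# export_image IMAGE ARCHIVE
# Hypothetical helper: writes the named image to a tar archive.
export_image() {
    docker save -o "$2" "$1"
}

# import_image ARCHIVE
# Hypothetical helper: loads an image from a tar archive on the target machine.
import_image() {
    docker load -i "$1"
}
```

For example, running `export_image myapp myapp.tar` on the development machine, copying `myapp.tar` across, and running `import_image myapp.tar` on the production host would make the same image available there.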

Docker Now Uses LibContainer

Containerization has been around for well over a decade, but it has only recently started attracting a lot of attention. Docker started with LXC (Linux Containers, which had been around for a few years and are used in Heroku), then switched to LibContainer, which is open source and features contributions from Red Hat, Google and Canonical. Because it is open source, LibContainer can be used by anyone; companies with their own existing container technology have adopted this consistent API to avoid needing to choose one container toolkit over another.

Conclusion

Personally, I find that Docker makes my life as a developer easier. It's easier to test applications against different versions of operating systems, databases, and scripting languages. There are even Docker hosting services available, such as Tutum. If you need an in-browser tutorial, here’s one to get you going.