[caption id="attachment_142059" align="aligncenter" width="2482"]  CoreML WWDC 2017[/caption]

Machine learning is not a fad or distraction for major tech companies. It’s been in use for quite some time, although tech giants such as Google have only recently made a big show of publicizing their efforts in it. Apple is finally letting its developer group tap into it via the new CoreML framework. Here’s how.

CoreML is, as you may guess by the name, dedicated to machine learning. It’s positioned to serve as the go-to framework for machine learning on Apple platforms such as iOS (that’s why Apple branded it with the ‘core’ moniker). Still officially in beta, CoreML is positioned as something that will be ready for use with iOS 11. An important note: users don’t need new hardware for an app to take advantage of CoreML; it’s a framework, and not reliant on any specific hardware or on-device functionality.

The first thing developers will want to do is find a machine learning model. Apple doesn’t have any restrictions here, per se, but does offer up some widely used models as a sort of ‘best practices’ for CoreML. But all models touted by Apple hint at a fundamental problem with CoreML, as well. Each model is meant for scene detection, which is how apps can scan images for faces, trees or other objects. As Apple states:

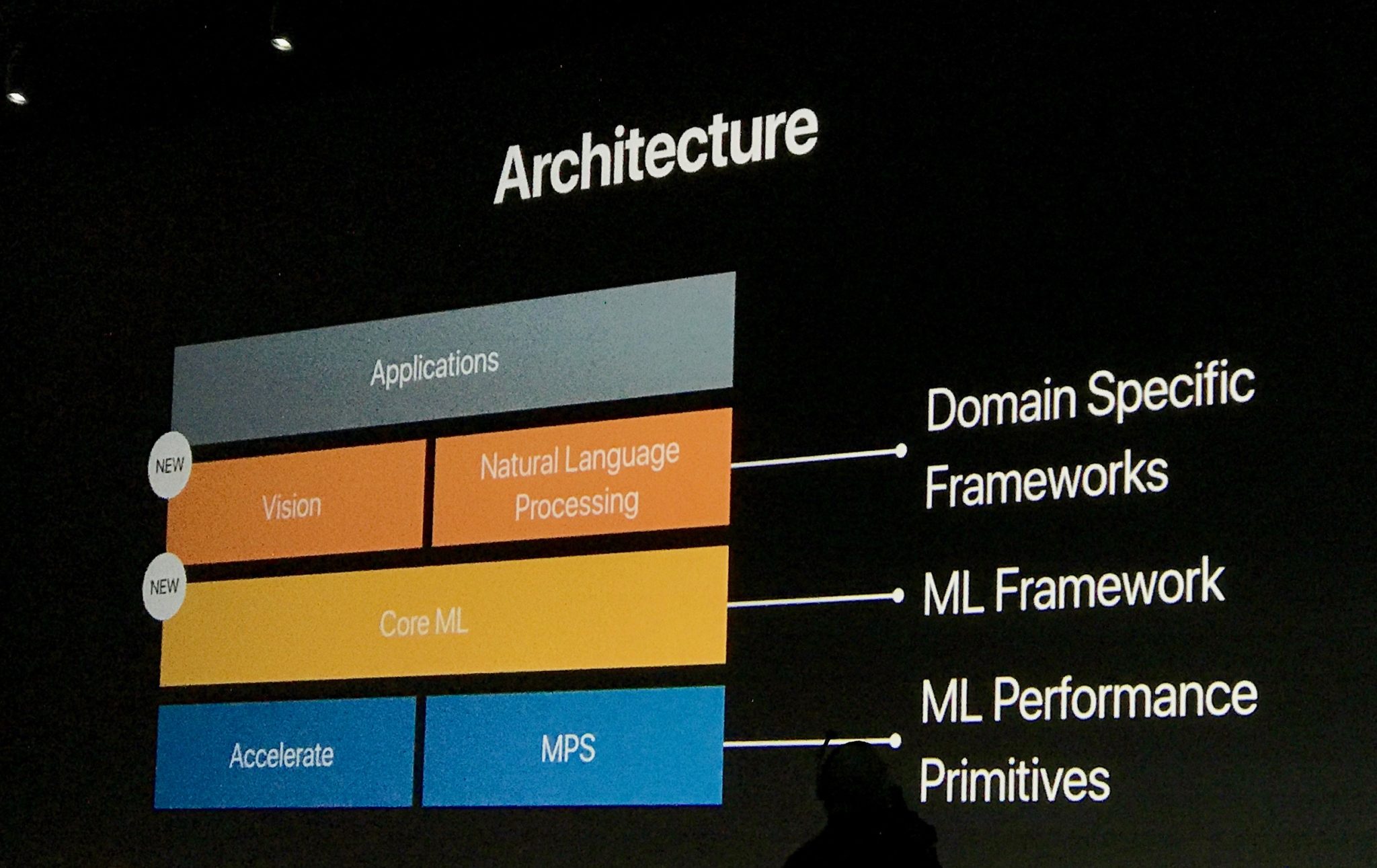

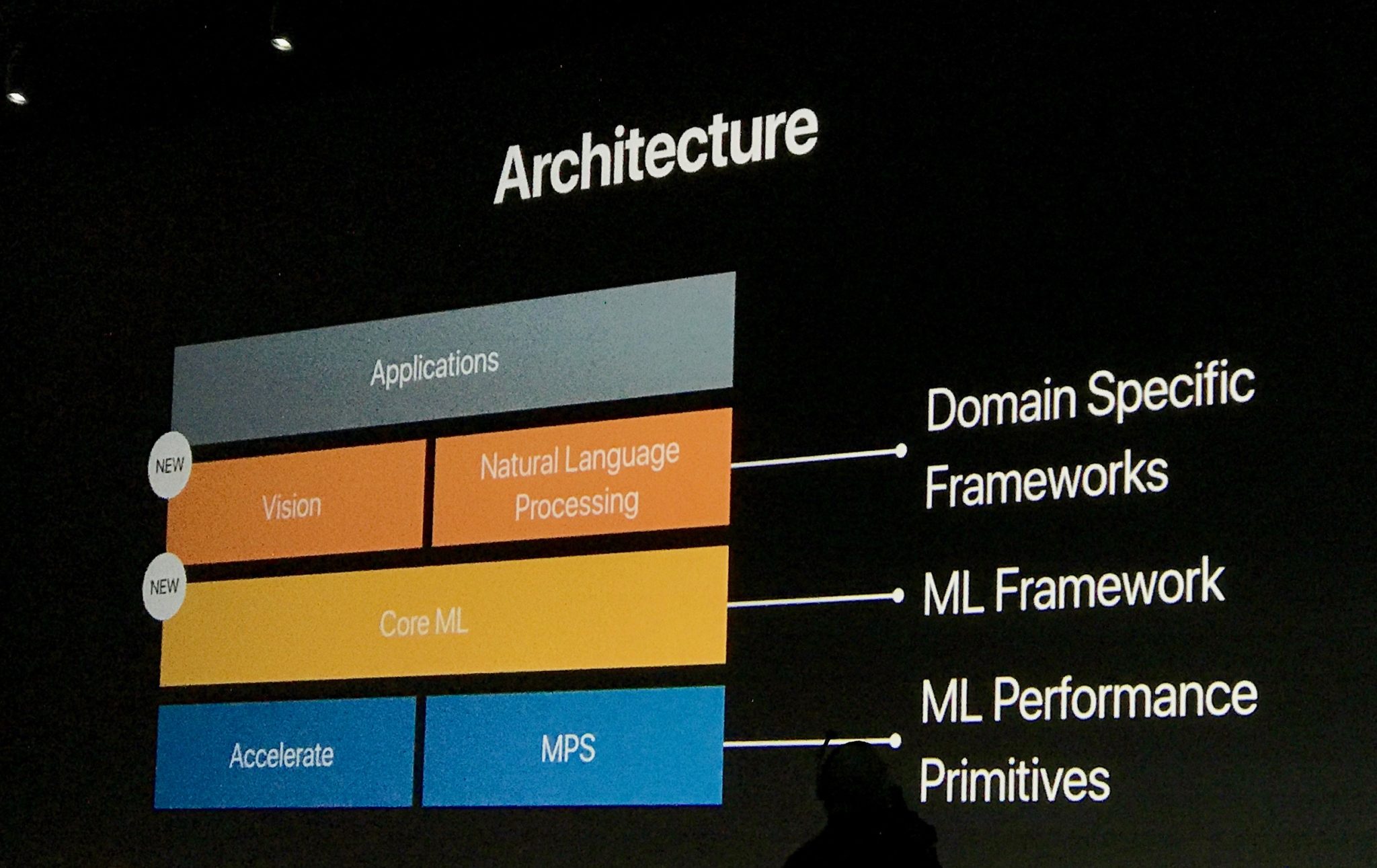

“Supported features include face tracking, face detection, landmarks, text detection, rectangle detection, barcode detection, object tracking, and image registration.”

CoreML Vision doesn’t access machine learning models via an API. Instead, Apple has several classes for implementing the models.
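As a rough sketch of what working with those classes can look like, the snippet below counts the faces in a single image using Vision's `VNDetectFaceRectanglesRequest` and `VNImageRequestHandler`. The helper name `countFaces` is made up for illustration, and the code assumes you already have a `CGImage` in hand; treat it as a starting point rather than Apple's recommended pattern.

```swift
import Foundation
import CoreGraphics
import Vision

/// Counts the faces Vision finds in one still image.
/// `countFaces` is a hypothetical helper name, not part of Vision.
func countFaces(in image: CGImage) throws -> Int {
    // The request describes what to look for: face bounding boxes.
    let request = VNDetectFaceRectanglesRequest()

    // The handler is tied to a single image and runs requests against it.
    let handler = VNImageRequestHandler(cgImage: image, options: [:])
    try handler.perform([request])

    // Each observation describes one detected face.
    let faces = request.results as? [VNFaceObservation] ?? []
    return faces.count
}
```

Swapping in a different request type (text, rectangles, barcodes) should follow the same shape: build a request, hand it to a handler, then read the request's results.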

VNImageRequestHandler is “an object that processes one or more image analysis requests pertaining to a single image,” for example. In lay terms, it’s the request to scan an image for a particular feature, such as a face. Scanning a sequence of images will require the VNSequenceRequestHandler class.

Within Vision, you can get quite detailed. Developers can scan for faces, but also facial landmarks like eyes or a mouth. In a crude way, this is how those apps that force a smile on a photographed face work; they find your mouth, then present a layer that ‘adds’ a smile.

[caption id="attachment_142060" align="aligncenter" width="2494"]  CoreML Architecture WWDC 2017[/caption]

CoreML Vision is deep, and will be attractive for simple-purpose apps. Developers who try to corral the entirety of this framework will have cumbersome codebases to support. An example: Apple has five classes dedicated to object detection and tracking, two for horizon detection, and five supporting superclasses for Vision.

It’s a lot to take in, and this is all before we convert the model. While pulling your model into the app’s code is necessary, Apple also asks developers to convert it to a CoreML-friendly format. It has a fairly easy-to-use conversion for most well-known models, but developers who use something not officially recognized may need to create their own conversion tool. Apple notes that developers will be “defining each layer of the model’s architecture and its connectivity with other layers,” but only says they should “use the conversion tools provided by Core ML Tools as examples.” There’s no strong guidance there.

If you need to create a Vision app that’s a bit more robust, CoreML has an API. It’s meant for custom workflows and “advanced use cases.”

Vision is only one aspect of CoreML, though. Speech and text also play a big role, which is where Foundation comes into play.
Foundation may have a lot of moving parts, but its Strings and Text structs and classes make an appearance in CoreML. Most notably, NSLinguisticTagger will help apps analyze natural-language text, breaking it into tokens such as words and sentences and tagging them (with parts of speech, for instance) across multiple languages.

The third part of all this is the subtlest player. GameplayKit helps evaluate “learned decision trees” in CoreML. It’s the decision-making piece that sits alongside Vision and Foundation. As the framework is optimized for on-device performance, it takes a strong team to make it work. Vision, Foundation and GameplayKit are the stars, while frameworks such as Accelerate and Basic Neural Network Subroutines (BNNS) pick up some of the slack.

Using CoreML isn’t going to be easy. It has a lot of moving parts, and there’s an exhaustive list of customizations that can be made. Still, if machine learning is something your app can take advantage of, it’s likely worth the trouble. CoreML is a part of Apple’s universe now, and it’s going to take an increasingly larger role as time goes on. Now’s the best time to get acquainted with it.
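For a low-stakes first experiment with the text side mentioned earlier, here is a minimal sketch that uses NSLinguisticTagger to label each word in a sentence with its lexical class. The helper name `tagWords` is invented for this example, and the word-level `enumerateTags` call assumes the iOS 11 / macOS 10.13 SDK or later. Newer SDKs mark NSLinguisticTagger as deprecated in favor of the Natural Language framework, so expect warnings there.

```swift
import Foundation

/// Returns (word, lexical class) pairs for the given text.
/// `tagWords` is a hypothetical helper name, not part of Foundation.
func tagWords(in text: String) -> [(word: String, tag: String)] {
    // Ask the tagger for lexical classes (noun, verb, and so on).
    let tagger = NSLinguisticTagger(tagSchemes: [.lexicalClass], options: 0)
    tagger.string = text

    // NSRange counts UTF-16 code units, so measure the string that way.
    let range = NSRange(location: 0, length: text.utf16.count)
    let options: NSLinguisticTagger.Options = [.omitWhitespace, .omitPunctuation]
    var result: [(word: String, tag: String)] = []

    tagger.enumerateTags(in: range, unit: .word, scheme: .lexicalClass, options: options) { tag, tokenRange, _ in
        // Skip tokens the tagger could not classify.
        guard let tag = tag else { return }
        let word = (text as NSString).substring(with: tokenRange)
        result.append((word: word, tag: tag.rawValue))
    }
    return result
}

// Usage: print each word with whatever class the tagger assigns it.
for entry in tagWords(in: "Apple builds frameworks") {
    print(entry.word, entry.tag)
}
```

The exact tags depend on the language models available on the device, so the output is best treated as something to inspect rather than rely on.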