[caption id="attachment_6383" align="aligncenter" width="618"]  Buckets of fuel kept Peer1's servers up and operating as Hurricane Sandy flooded lower Manhattan.[/caption]

When Hurricane Sandy knocked out the electricity in lower Manhattan, data-center operator Peer1 took extreme measures to keep its servers humming, assembling a bucket brigade that carried diesel fuel up several flights of stairs. For this installment of our “Surviving Sandy” interview series (we previously talked to CoreSite and IPR), we sat down with Ted Smith, senior vice president of operations for Peer1, who talked about the decisions made as the floodwaters rose and the main generators went offline, and about the changes his company has made in the aftermath of the storm.

So, just for the record, you operate one data center in New York City, correct?

That’s correct. One data center and a couple POPs (Points of Presence).

Where is that data center located?

75 Broad St.

And you don’t own the building, do you?

No. We own two small data-center spaces within the building.

And how large are those spaces?

Oh, geez, I don’t know offhand. I’d say somewhere in the neighborhood of 5,000 square feet. Not large.

And what [flood zone] was that in? Zone 1, Zone 2?

I’m sorry, I don’t know offhand. It was on the south side of the island, directly affected by the hurricane.

The New York City area obviously knew that Sandy was heading their way. Can you give me a sense of what preparations were made, and when those took place?

We have facilities across Canada and the U.S., and a facility in Miami, so we’re real accustomed to preparing for storms, and kind of have established procedures for that.
Generally, what we do is to make sure we have staff on site to ride out the storm, we obtain provisions for them, water and food, and make sure we have a place for them to rest, a cot, kind of make sure we have everything ready, flashlights, batteries, typical emergency supplies, and then anything else we might need to weather the storm. And that was what was done in New York. But we were obviously hit a bit harder than expected, and it got a bit crazy.

Give me a sense for why things got out of hand. Was it a case of the storm surge rising higher than expected, or something else?

Obviously it was a very major storm for New York, with very widespread damage across South Manhattan. The building itself had significant damage to its infrastructure, being an older building, an established building in New York, with a lot in common with other buildings in New York, with a lot of infrastructure in sub-floor basements. Those were flooded during the hurricane. All of our infrastructure that was located below the first floor was severely damaged and non-operational until the basements were able to be pumped out, which took several days.

So did the water seep into the basement, or flow into the lobby and down the stairs?

Well, I believe, if I remember correctly, there was four feet of water in the lobby, at the peak of the storm.

So it just flowed downstairs?

Yes, it was just pushed in from the storm.

And it flooded the generators?

In the basement of that building are the main generators, the transformers, the switch gear, and obviously the input from utility. And it also had the main storage tank for the generators.

So, as the water began entering the building and flowing downstairs, walk me through the decisions you were making at that point in time.

We’d already gone over to generator; when a storm approaches, you shift over from utility [power] and go to generator when interruptions of power occur, so you don’t go back and forth.
When the water got to a point that it had flooded the infrastructure and the basement, we were then operating under the reserves the building had on the roof, and our own storage tanks. Literally, at that point we had to do calculations as to how long we could run. And we believed we had enough diesel fuel, between what is in the building and in our tanks, to last until about 9 AM the following day. And our typical process for these sorts of situations, which we call a critical outage or a critical incident, is that we open a phone bridge and communicate status and decisions about what we’re going to do. We had made the decision that morning to go with a controlled shutdown, which means notifying our customers that a power outage is imminent, and asking them to shed load and turn off their systems gracefully so they aren’t damaged because of the loss of power. And it’s something we generally do in any situation where we believe there’s a strong probability we’ll have to shut down a facility. As we got closer and closer to the 9 o’clock hour, we found a little bit more fuel in the calculations, thought we could go a little bit longer. That hour turned into two hours, turned into four hours, and before the end of the day, customers that showed up because of the notices helped [us] haul fuel up into our storage tank. So we were able to, hour by hour, get enough fuel to keep the system running. [Editor’s Note: Peer1 operated backup generators on the 17th floor.]

It was my understanding, on the morning of Oct. 30 at about 10:30 AM, Fog Creek Software said that they had shut down. But if I understand you correctly, Peer1 didn’t shut down?

We told the customers that we were going to shut down, and that they needed to turn off their systems. A lot of customers did turn off their systems, their servers, and we were able to find enough power, enough diesel fuel to keep the power on, and we notified them that we were hour-by-hour at that point.
So a lot of them did send notices to their customers, and did turn off; some of them sent notices, and didn’t turn off, because they didn’t have to. I’m not certain with Fog Creek if they did turn off, or didn’t; I know they went down to the data center and jumped in and helped with the bucket brigade.

So technically, you guys were never down, but you notified your customers that you could go down.

Yes. The following day we did have to shed some load in one of our data center rooms, and that was because we didn’t have all of our cooling on our own infrastructure; it was on the building infrastructure, and the power had not come back up. And because some of the other data centers had begun to come online, on generator, some of the air cooling we were doing, just pulling air from outside, was no longer effective, because of the exhaust from the generators that were running. We did shed a small number of customers in the exercise, but it was a very small number, a small percentage of the total.

So you had generators in the basement, and fuel on the roof. Do I have the layout right?

Right. The building supplies fuel to us, in that building, and they had a storage tank, not on the roof but on the 19th floor of the building or something like that. And we had our own storage tank, our own day tank, on our data center floor, which was on 17, if I remember correctly.

So at any point was there a call to arms, asking customers to help get fuel into the building?

So that’s the funny thing. You know the bucket brigade, it’s something I’ve never asked the team to do. If you think about what that was at that time, you’re talking about carrying fuel up 17 flights, in total darkness, throughout a whole evening. We had informed our data center manager that we were shutting down, but he kind of took it on himself to say, ‘Not on my watch.’ And he organized himself, got a temporary solution and then more customers jumped in. And at peak I think we had about 30 people helping.
And that call really went out through social media and through calls to friends and family in the area. It wasn’t a coordinated effort from Peer1 to get people on site. We did get people on site, but that whole activity of keeping the data center online through manual efforts on carrying the fuel up was really the local team and customers that did it.

Where did the fuel actually come from?

I’m trying to remember. We did have fuel come from a local company; that’s where the first deliveries came from. And they were being delivered in 50-gallon drums and then carried up from there. It was locally sourced.

So you were just pouring the fuel into buckets and carrying them upstairs?

You saw the pictures, I’m sure. They literally had a fire line going: carry it up one set of stairs, hand it to the next guy, and pass it along to the rest, in a variety of containers.

And how long did that go on, would you say?

That went on for, 48 to 72 hours? Until we could source the piping and the hose to get the diesel carried up.

And how many of those employees were from Peer1, versus your customers?

At most it would have been five Peer1 employees. People were trading in and out for sleep and rest. We have three permanent employees in the New York area, some contractors, five at most, so the rest, twenty-five, were customers and friends. And we were looking for a permanent source of fuel. Calling a variety of sources to get equipment up to our floor, calling to get diesel to the building, to their storage tank. Lots of coordinated effort: how do we get the basement pumped out, how do we get diesel fuel in place, and where that permanent source of power will come from.

Was this something that you think you weren’t prepared for?

No, I don’t think that anyone could have been prepared for a situation like this. This is a once-in-a-hundred-years storm.
Now, as you said, with some learnings [taken] away from it, we had trouble with sourcing the equipment after the storm, because every company that we talked to, one, didn’t know the status of their locations; two, didn’t know where their people were, or their people’s ability to get to their offices and help us with that equipment; and also, they were getting overwhelmed with other calls, too. So having those things sourced in advance is something that you definitely need to be prepared for. The learning there is, when diesel isn’t available, how do you get diesel into your site, and are you prepared to handle that in that critical situation.

And where did you get the pumps to pump out the basement? Were those supplied by the city?

I believe those were all supplied by the building. The building had several engineers on site, 24/7 as well, so their main focus was getting their infrastructure in place, and they began pumping. I’m not sure if it was the city, or ConEd, or the building themselves, but that was all done in parallel.

Tell me about getting the pumper trucks down to the site after the storm. How easy was it to maneuver? Was there debris? Any problems with local police?

I can’t answer that question specifically because I was not on site, but I know it wasn’t easy. For one, there was no power at all in southern Manhattan. From what I was told by Ryan Murphey, who was on site, it was pitch dark. You could see nothing without a flashlight or headlights from a car. And there were lots of vehicles around, so it was not easy to maneuver, to get into the space. Parking was tough. Cars had been moved out of parking garages, and it was a mess in south Manhattan. It was not easy to get those trucks down to the building.

So you were looking for a fuel truck as well as a separate vehicle to pump it to the top of the building?

The first step was [for] the truck to just deliver the fuel, to keep the bucket brigade going.
The second was the pumping equipment and the piping to get [the fuel] up the stairwell into the building’s tanks. We started trying to source that the... morning of the 30th, and it took roughly 72 hours to get that in place, by the time we were able to get it in place.

So at the time you had the pumping truck in place, what happened?

We also had a secondary generator, a roll-up, in the same time frame. We shifted to making sure to get off the installed generator and run on a roll-up, and make sure we didn’t fail and have a single generator on that site. And then we brought in as many people as we could to make sure we had at least one facilities person and two DCO people on site, 24/7.

And what was the communications infrastructure like during this time?

All of our communications infrastructure, all of our Internet connections, all that functioned throughout.

Because you run dedicated connections between your data centers?

We do, yeah.

And then you were on generator power for how long?

Up until a few days ago [as of Nov. 29]. We’re currently on temporary utility power. There are some long lead times to replace the gear.

And what does that mean, temporary utility power?

It means most of the equipment in the basement was destroyed. The water damage. The building has been able to source enough equipment to get us on utility, but there will have to be some major work to get us all finished. We’ll be switching between utility and generator on and off through the rest of the year.

So we’re talking about weeks and months to get back to “normal,” right?

Yeah, weeks for sure. I haven’t had a chance to see Ryan and get the schedule (he’s in Atlanta next week to talk), but yeah, it’s a long-term process to get back to usual.

So have you done a post-mortem at this point in time?

A little bit, a little bit. We have some actions we want to take, go forward.
The biggest one is that Ryan will be defining an emergency response team, and training and preparing so that those folks are ready to leave on a moment’s notice. So if we have the threat of these things in the future, we can get them on site before the storm hits. Having people on site before the damage occurs is definitely critical; in this situation all the major airports are shut down right after the storm. And having all the equipment sourced and ready prior to the storm is also very helpful. Because after it all occurs it’s really hard to get people to help you.

You said that you had facilities in Miami. Did you ever experience anything like Sandy there?

No, we’ve had several hurricanes roll through south Florida, and some big storms. It’s a different environment there; since there’s a constant threat of hurricanes, the buildings there are different. Our building there is a Category 4 evacuation center, so the building is really designed to stand and hold up to really major storms, and it’s right by the airport so it’s not on the coast. It’s a bit of a different situation. In a place where you don’t have a storm like this, a once-in-a-hundred-year storm, the preparation and the infrastructure isn’t prepared for it.

Tell me a little more about the emergency response team. What are their goals? What are they tasked to do?

We have a very good process. First of all, we’re a very distributed company. We have large offices across North America and the U.S., and we have teams that are spread out in multiple cities, where we don’t have a single situation where it will cripple us, from a resource or an employee’s perspective. But the idea is to have folks ready to go on a moment’s notice to each of our facilities. Each of our facilities is a little bit different, so to say exactly what they need for each facility is a little bit different.
But the main thing is the folks that are going have the knowledge of our infrastructure and the willingness to put in long hours to get through these critical situations, so that either our local team can take a break and go home and see family and take care of their own personal things, or we know we have really good coverage in those issues.

Any other changes you guys are making as a result of Sandy?

That’s the main one. It’s always good for our business to be in control of your infrastructure, as things move faster, but that’s just kind of a given, a normal business issue as well. But getting a little better on the emergency response team, sourcing spare and emergency gear is the other one. And having contracts available for critical infrastructure, whether that’s fuel, or electrical infrastructure, is also helpful.

I saw Fog Creek moved its Trello software to Amazon’s AWS, and they had contingency plans in place to yank the servers and move them across the river, if needed. Have you had any customer feedback from Sandy, and did you lose any customers after the storm? (Editor’s Note: Fog Creek declined to comment.)

I don’t think we’ve lost any customers. I think we’ve gained some customers from it. The bucket brigade story is a bit of a window into Peer1’s culture and value and execution. There was a little bit of frustration with having to shut down for the customers, and that notice of imminent outage. I don’t think we’ve lost any customers, and I know for a fact we’ve gained at least one.

Image: Fog Creek Software


Dice Staff

Dice Staff is the editorial team behind Dice, a leading tech career hub with more than 30 years of experience supporting both job seekers and employers. With decades of experience, the team offers insights on job search, career growth, talent acquisition, artificial intelligence, and retention that help everyone thrive in today’s competitive tech landscape.

Related Articles

-

NYC Data Centers Struggle to Recover After Sandy

[caption id="attachment_5593" align="aligncenter" width="618"] ConEd outages as of 10:30 AM EST Oct. 31.[/caption] Problems in New York’s data centers persisted through Wednesday morning, with ho… -

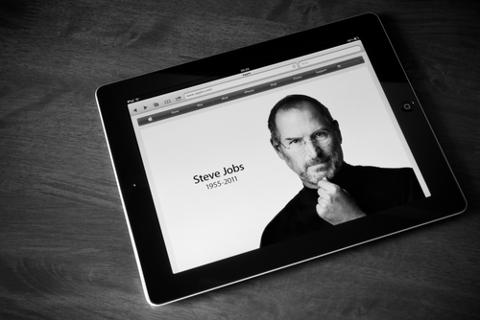

How One Apple Employee Survived a Steve Jobs Product Review

In the popular conception, the late Steve Jobs is a megalomaniacal jerk who screamed, bullied, and pushed until he got whatever he wanted. But according to Don Melton, who started the Safari and… -

5 Tips for Surviving Your First Year in Tech

Whether you're a recent computer-science graduate or a brave career-changer just out of a coding bootcamp, navigating your first year in tech can be challenging. “There was so much to learn durin…