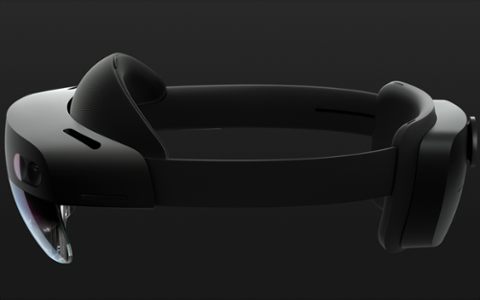

HoloLens HPU 2.0

At its Build 2017 conference, Microsoft tried to make its HoloLens augmented-reality (AR) headset more intriguing, saying AR and virtual reality were essentially different points on the same “mixed reality” spectrum. Now the company is offering more insight into its vision for HoloLens, which will gain the ability to run deep neural networks.

HoloLens already has its own custom coprocessor, which Microsoft calls the Holographic Processing Unit (HPU). The only version available today is found in existing HoloLens headsets. HPU version 2.0 will incorporate an AI coprocessor designed to run deep neural networks (DNNs). The twist is that HPU 2.0 won’t depend on cloud-based neural networks, as most other platforms do. Instead, the chip will allow neural networks to run natively on the device.

Microsoft says: “The chip supports a wide variety of layer types, fully programmable by us.” It also notes that the coprocessor “is designed to work in the next version of HoloLens, running continuously, off the HoloLens battery.” All told, it sounds as if Microsoft is putting customizable neural-network hardware directly inside its headset.

You might ask why that would be necessary. For general use, it probably isn’t; but for commercial or enterprise use, it opens up a wider array of possibilities. For example, HoloLens might help a mechanic diagnose and repair an engine that is not yet in a production vehicle, drawing on a database stored on the headset rather than on a server. A neural network could help that mechanic recognize unusual issues. Depending on connectivity and how secretive the client wants to be, native processing could be the better choice: to protect trade secrets, the manufacturer may prefer that such problems and diagnoses never leave the device.

A move to native neural networks may distinguish HoloLens from its upcoming competition. Rivals such as Google and Apple are moving in their own directions. Google’s VR platform, Daydream, uses a headset as a cradle for an accompanying Android smartphone, while Apple is currently weaving AR into iOS 11. Apple is also believed to be working on an AR headset, although the popular theory is that it will rely on an iPhone for processing data; Core ML, a machine learning toolkit, will also be available to iOS developers this fall.

Google also has the Google Glass augmented-reality headset. Recently reintroduced as an enterprise product, Glass already has enterprise partners using it. HoloLens may be trying to compete on that business-centric front, where a full visor should be more useful than a small viewfinder. (If Apple’s intent really is an AR headset, trying to encourage people to buy a bulky, expensive carriage for daily use simply won’t work.)

In its blog post announcing the new HPU, Microsoft states: “We’re in the business of making untethered mixed reality devices.” It’s hard to tell if native neural networking will make HoloLens more attractive, but it’s perhaps a move in the right direction.
