Data center system vendors are making a lot of fuss about launching their latest generation of gear targeted at cloud, big data, and hyperscale datacenters in general. The sales collateral alone makes for fairly dense reading. But what are they trying to sell, and why?

Marketing and sales people visualize their customers’ decision-making process as a purchase funnel: awareness (“I don’t care what you say, just spell my name correctly”), interest (research, consideration), desire (engagement, conversion, preference), and action (in this case, purchase). The trick, as in any competitive arena, is to differentiate, to be noticed, to stand out from your competition. To stand out in the later stages of the purchase funnel, you have to provide noticeably better value along some axis of performance. To create a new category of products, you have to go even further. Andy Grove used a metric of 10x improvement to describe how much better a product had to be to cause a strategic inflection point in an industry, what we now call disruptive innovation. Given the massive investments vendors are directing at inventing new hyperscale infrastructure, let’s take a look at how they stack up against each other and whether they really are disruptive products.

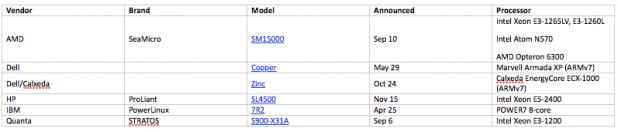

The Contestants–Big Data

Focusing in on the market du jour…  HP announced their Project Moonshot “Gemini” system in June, but there are no public specs to include in this comparison. Their beauty shots look very interesting, though.

Claims

After filtering through the product briefs, specs, and white papers, a few product attributes percolate to the top of the list as being important to big data and hyperscale:

Huge amounts of DAS (Direct Attached Storage): Each of these systems aims to stuff as much mass storage as close to each server node as it can manage. But it’s not clear that they have a limit in mind. Nor are they comparing to each other: they’re comparing their storage capacity to traditional rack architectures.

Fabric bandwidth within the compute plus storage complex: This is terra incognita. They tout their fabrics as a competitive advantage, but there are no direct ties back to workload performance. At this early stage of in-rack network fabric evolution, the proprietary fabric architectures are effectively black boxes.

Network bandwidth into the compute plus storage complex: This is also a bit dicey for comparisons, in that the sizing of their compute plus storage complex gets complicated very quickly. It’s easy to see that a couple of 10GbE pipes into a bunch of processing nodes simplify cabling and eliminate the need for in-rack switches and maybe the top-of-rack switch, but there’s not much clarity about whether bandwidths and latencies are matched to a particular workload.

Performance per watt, per dollar, per rack U factor, and combinations of all three: Even if we can measure workload performance across a rack, taking into account node performance, fabric performance, the impact of the storage hierarchy and storage capacities available, and network performance to and from the datacenter network backbone, we’re still not measuring the impact of power efficiency for these workloads on a meaningful scale. Nor are we making statements about compute density for that power efficiency. Similarly, both the capital expense of deploying this new infrastructure and operational expenses not related to electrical power (i.e., system management) are not taken into account by performance metrics, though they are mentioned as advantages in most vendors’ marketing literature.

“Performance” for big data and hyperscale systems is not very well defined. What is clear is that performance has transcended individual server node specs and thread-level performance; the sum of the performance of many individual server nodes is now dependent on many external factors.
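To make the per-watt, per-dollar, and per-rack-U idea concrete, here is a minimal sketch of how a customer might normalize the results of an in-house trial and check them against a Grove-style 10x bar. Everything in it is an assumption for illustration: the `RackTrial` record, the function names, and all of the numbers are hypothetical placeholders, not vendor data or measured results, and "throughput" stands in for whatever workload-level metric a customer actually cares about.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RackTrial:
    """One trial result for a rack-level system (all fields hypothetical)."""
    name: str
    throughput: float   # workload units per second, measured by the customer
    watts: float        # power drawn during the trial
    cost_usd: float     # acquisition cost of the tested configuration
    rack_units: float   # rack space occupied, in U


def efficiency(trial: RackTrial) -> dict:
    """Normalize measured throughput by power, cost, and rack space."""
    return {
        "per_watt": trial.throughput / trial.watts,
        "per_dollar": trial.throughput / trial.cost_usd,
        "per_rack_u": trial.throughput / trial.rack_units,
    }


def ratio_vs_baseline(candidate: RackTrial, baseline: RackTrial) -> dict:
    """Express each candidate metric as a multiple of the baseline's."""
    cand, base = efficiency(candidate), efficiency(baseline)
    return {axis: cand[axis] / base[axis] for axis in cand}


def clears_threshold(candidate: RackTrial, baseline: RackTrial,
                     threshold: float = 10.0) -> dict:
    """Flag the axes on which the candidate is at least `threshold` times better."""
    ratios = ratio_vs_baseline(candidate, baseline)
    return {axis: ratio >= threshold for axis, ratio in ratios.items()}


if __name__ == "__main__":
    # Placeholder numbers only; substitute results from a real trial.
    baseline = RackTrial("traditional rack", 1000.0, 5000.0, 100000.0, 10.0)
    candidate = RackTrial("hyperscale chassis", 4000.0, 1000.0, 80000.0, 8.0)
    for axis, ratio in ratio_vs_baseline(candidate, baseline).items():
        print(f"{axis}: {ratio:.1f}x baseline")
    print(clears_threshold(candidate, baseline))
```

The arithmetic is the easy part. The hard part, as noted above, is obtaining a throughput figure that reflects a real workload at real scale, and this sketch leaves out operational costs such as system management entirely.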

Benchmarks?

The state of the industry today is that there are no synthetic or standardized performance benchmarks that are meaningful to most big data customers. They have to “try before buy”: either a vendor builds a cluster that customers can access to test their workloads, or customers must invest in a nominal amount of equipment to test in-house. Either way the testing scale is small, and the customer must figure out how to scale their trial results from a small test bed to the equivalent of a real-world deployment. It is not for the faint of heart.

I believe there is awareness in the industry. These new solutions are receiving a lot of press attention and hype. The largest big data and hyperscale customers are driven to find more optimal solutions for these new workloads, and have strong economic incentive to do so. Smaller customers are waiting for use cases and clear signals from those large market leaders.

Looking a little closer at potential big data workloads mentioned in vendors’ promotional material, Hadoop and Cassandra stand out. These are not workloads; they fall into the same category as virtualization, in that they are frameworks that support workloads. There are tuning utilities and high-level synthetic benchmarks to ensure that these frameworks are functioning properly, and they provide useful hints to tune the framework’s baseline performance. However, at some point a NoSQL database has to be filled with workload-specific data, and that data varies wildly between workloads, with significant impact on workload performance. The same is true for analytics frameworks like Hadoop. Current synthetic benchmarks are not reliable indicators of behavior for a wide range of workloads. And these tuning utilities and early benchmarks do not measure performance per watt, dollar, or U factor.

The other complicating factor is that hyperscale datacenter operators today typically have source-code access to all of the OS, frameworks, and workloads they run. They’ve optimized their code over the last few years, so what they run isn’t quite the standard open source code they started with. They do need a standard method of comparing new gear as their choices proliferate. And in one major area they’re taking somewhat of a step backward: most of the systems I included above ship with a vendor-specific OS, and that OS cannot be separated from the hardware. It integrates a vendor’s in-chassis network fabric drivers. An operator can’t re-image the product with their in-house OS; that’s simply not a choice. And that’s affecting how they measure performance within their datacenter and how they select systems for future purchase consideration.

Customers are getting bogged down in the interest phase: there isn’t much variation in the language each vendor uses to describe their solutions. Traditional specs and benchmarks are not useful anymore. No one can claim a clear “10x” improvement over their competition because there are no clear comparisons between solutions. How can customers invest in a disruptive solution when vendors can’t clearly articulate their disruptive advantage and customers must go through extraordinary effort to measure the disruptive advantage for them?

Until the industry can rally behind a set of meaningful benchmarks, I’ll argue that there will be no way for superior solutions to objectively differentiate themselves from the rest of the pack. Every customer win will be the result of a time-consuming, costly, custom testing process. The current slow design-in process is an inhibitor to market growth for all of these new architectures; it’s not in anyone’s interest.